Google's New AI Tech Cuts Memory Use by 6x

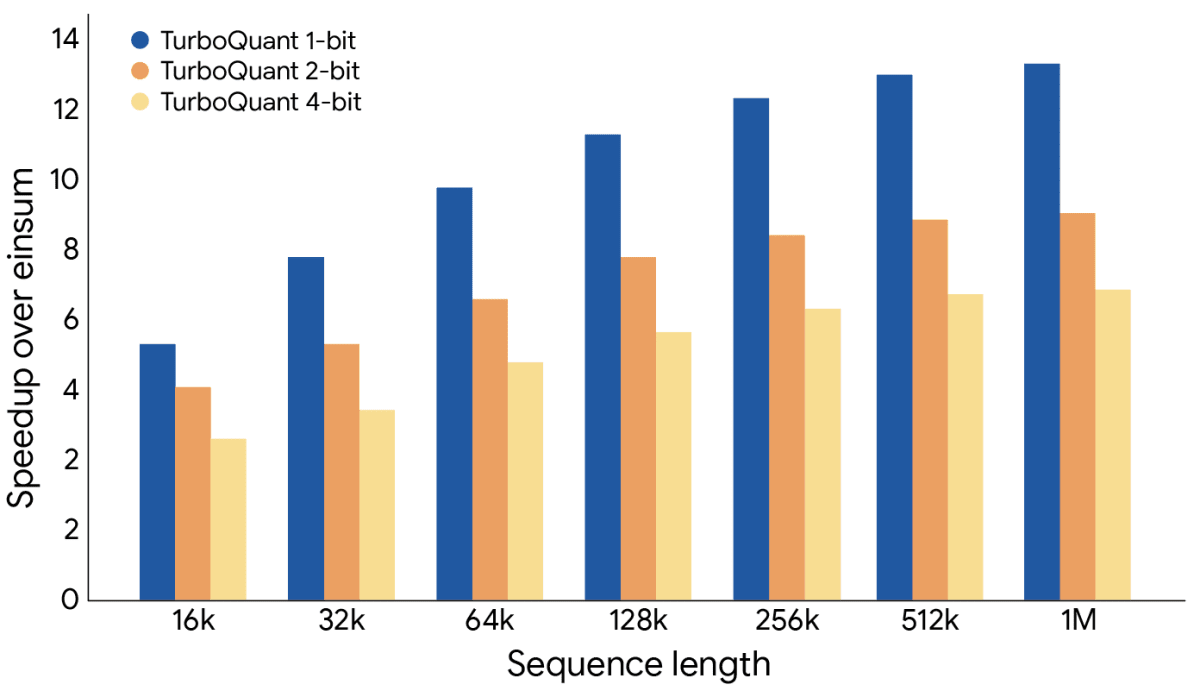

Google Research just unveiled TurboQuant, a breakthrough algorithm that makes AI models six times more memory-efficient while running eight times faster. This could finally bring powerful AI to your smartphone without draining your battery or compromising your privacy.

Your phone might soon run AI as powerful as ChatGPT without sending your data to the cloud, thanks to a clever mathematical breakthrough from Google.

TurboQuant is a compression algorithm that shrinks the memory footprint of large language models to one-sixth of its original size while actually making them faster. Even better, Google reports that the AI doesn't lose any accuracy in the process.

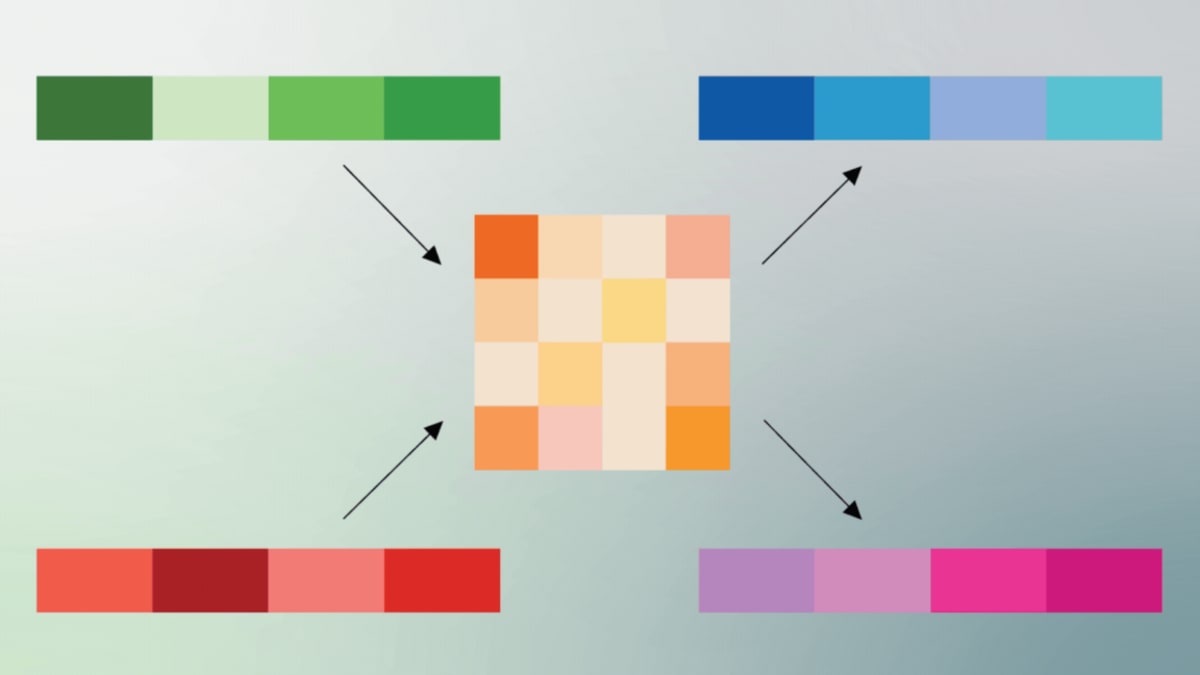

The secret lies in how TurboQuant stores information. Traditional AI models describe data with coordinates along fixed axes, like "go 3 blocks East, 4 blocks North." TurboQuant converts this into polar coordinates, simplifying it to "go 5 blocks at 37 degrees," with the angle measured from due north toward the east.

This polar coordinate system, called PolarQuant, acts like efficient shorthand. It reduces each piece of information to just two elements: a radius showing data strength and a direction showing meaning.
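
The street-directions example can be sketched in a few lines of Python. This is only an illustration of the coordinate change, not Google's implementation: the function names are hypothetical, the angle is treated as a compass bearing measured clockwise from north (an assumption that makes the 37-degree figure come out), and the angle rounding at the end is a simplified stand-in for how a compressor might store a direction in a few bits.

```python
import math

def to_polar(east, north):
    """Convert block counts into (radius, bearing in degrees clockwise from north)."""
    radius = math.hypot(east, north)
    bearing = math.degrees(math.atan2(east, north))
    return radius, bearing

def to_cartesian(radius, bearing_deg):
    """Invert to_polar: recover (east, north) from a radius and bearing."""
    theta = math.radians(bearing_deg)
    return radius * math.sin(theta), radius * math.cos(theta)

def quantize_angle(bearing_deg, bits):
    """Snap an angle to the nearest of 2**bits evenly spaced directions."""
    step = 360.0 / (2 ** bits)
    return (round(bearing_deg / step) * step) % 360.0

radius, bearing = to_polar(3, 4)
print(radius, round(bearing))  # 5.0 37
```

With 3 bits, the direction has only eight possible values, so a call like `quantize_angle(bearing, 3)` stores the 37-degree bearing as 45 degrees. More bits give a finer grid and a smaller error.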

The second step smooths out any compression errors using something called Quantized Johnson-Lindenstrauss. Think of it as a final polish that preserves all the important relationships between data points while using just one bit of information per vector.
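
The sign-bit idea behind a quantized Johnson-Lindenstrauss transform can be sketched as follows. This is a rough illustration under stated assumptions, not the code Google describes: it assumes a Gaussian random projection, keeps one sign bit per projected coordinate plus the vector's length, and uses the standard sqrt(pi/2) scaling to estimate a dot product from those bits. All function names are hypothetical.

```python
import math
import random

def make_projection(m, d, seed=0):
    """Build an m-by-d matrix of independent standard Gaussian entries."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sign_sketch(S, k):
    """Compress k to one sign bit per projection, plus its length."""
    bits = [1 if dot(row, k) >= 0 else -1 for row in S]
    norm = math.sqrt(dot(k, k))
    return bits, norm

def estimate_dot(S, q, bits, norm):
    """Estimate q . k from k's sign bits; q itself is kept at full precision."""
    m = len(S)
    total = sum(dot(row, q) * b for row, b in zip(S, bits))
    return math.sqrt(math.pi / 2) * norm * total / m

# Hypothetical example vectors; the true dot product is 0.6.
S = make_projection(2000, 4, seed=1)
k = [1.0, 0.0, 0.0, 0.0]
q = [0.6, 0.8, 0.0, 0.0]
bits, norm = sign_sketch(S, k)
print(estimate_dot(S, q, bits, norm))
```

Any single projection gives a noisy answer, but the average moves toward the true dot product as the number of projections grows. That is the sense in which the relationships between data points survive the compression.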

Google tested TurboQuant on popular AI models like Gemma and Mistral. The reported results were remarkable: no loss of accuracy across the benchmarks while using one-sixth of the memory. The algorithm can compress existing models down to just 3 bits per stored value without any additional training.
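
The memory arithmetic behind a 3-bit format can be checked with a short calculation. This is a back-of-the-envelope sketch, not a reproduction of Google's measurements: the 16-bit baseline and the count of one billion values are assumptions chosen for illustration, and the helper names are hypothetical.

```python
def storage_bytes(num_values, bits_per_value):
    """Bytes needed to store num_values numbers at a given bit width."""
    return num_values * bits_per_value / 8

def compression_ratio(baseline_bits, compressed_bits):
    """How many times smaller the compressed format is than the baseline."""
    return baseline_bits / compressed_bits

values = 1_000_000_000  # assumed count, for illustration only
print(storage_bytes(values, 16))  # 2000000000.0 bytes at the assumed 16-bit baseline
print(storage_bytes(values, 3))   # 375000000.0 bytes at 3 bits
print(round(compression_ratio(16, 3), 2))  # 5.33
```

From an assumed 16-bit baseline, the raw ratio works out to roughly 5.3 times. The exact figure in practice depends on the baseline precision and on any per-vector overhead, neither of which is specified here.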

The Ripple Effect

This breakthrough could transform how we use AI in everyday life. Smartphones have limited memory and battery power, which is why most AI features currently send your questions to distant data centers for processing.

TurboQuant changes that equation. With models six times smaller and eight times faster, your phone could handle complex AI tasks locally. That means better privacy, faster responses, and no internet connection required.

The technology works on existing AI models right now, with no retraining needed. Companies could immediately start offering more sophisticated features on devices people already own.

Medical professionals in remote areas could access diagnostic AI without reliable internet. Students could get AI tutoring help even in areas with poor connectivity. Emergency responders could use AI analysis tools that work anywhere.

Of course, tech companies might also use this freed-up memory to build even more complex models. But even that scenario creates a win: either we get more efficient AI on current hardware, or we get more powerful AI on future devices.

The breakthrough matters most for mobile technology. Desktop computers and cloud servers can always add more memory, but smartphones face hard physical limits. TurboQuant works within those constraints to deliver better results.

Google's research proves we can make AI both smaller and smarter at the same time.

Based on reporting by Ars Technica

This story was written by BrightWire based on verified news reports.
