MIT Creates AI Debiasing Tool That Actually Works

Researchers at MIT have tackled a major problem in artificial intelligence: removing one kind of bias without accidentally amplifying others. Their new technique, called WRING, helps AI systems make fairer decisions in critical areas like medical diagnosis.

Imagine an AI tool that helps doctors spot skin cancer, but only works well on certain skin tones. For patients with darker skin, a biased model could miss a life-threatening diagnosis.

Researchers at MIT, Worcester Polytechnic Institute, and Google just cracked a puzzle that's stumped AI scientists for years. They created a technique called WRING (Weighted Rotational DebiasING) that removes bias from AI vision models without creating new problems.

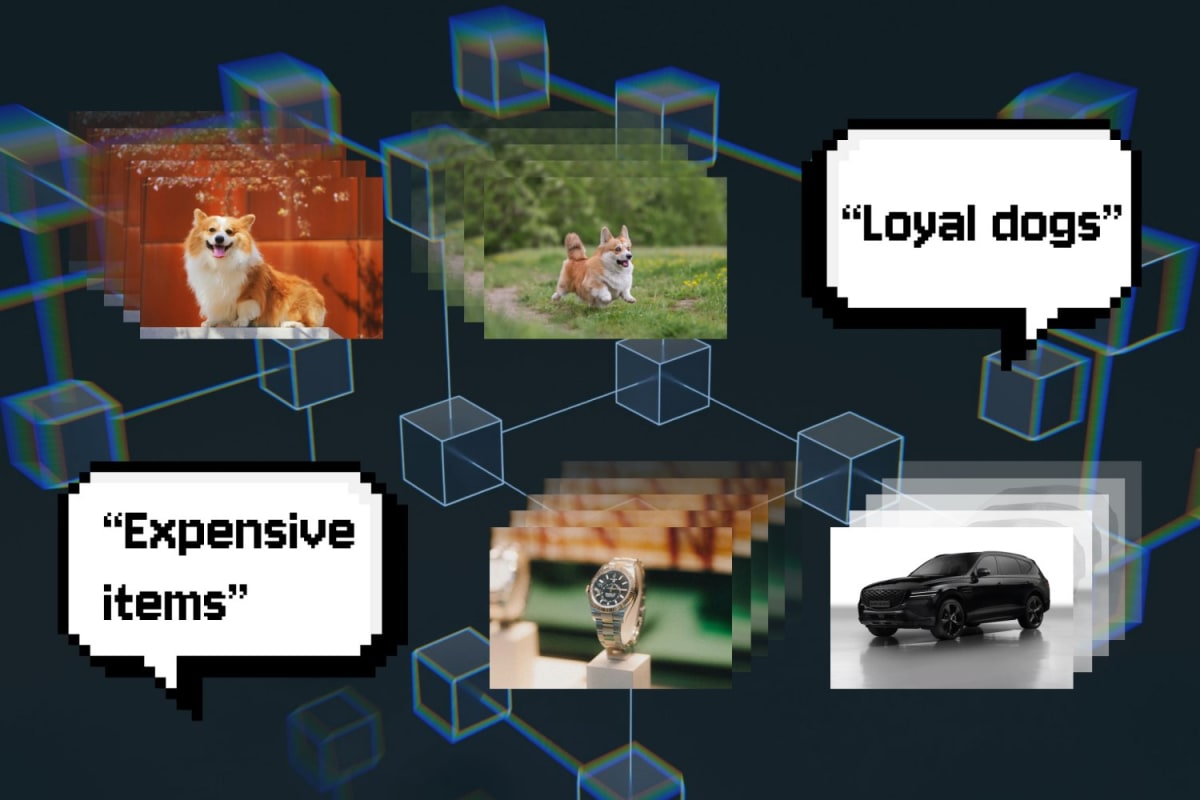

The challenge has always been what scientists call the "Whac-A-Mole dilemma." When researchers tried to fix one type of bias using existing methods, they accidentally amplified others. Remove racial bias from a medical imaging system, and suddenly it becomes more biased about gender.

Walter Gerych, the study's lead author and now a professor at Worcester Polytechnic Institute, explains that the old approach squished all of the AI's learned relationships together when trying to cut out bias. As a result, everything else the model had learned shifted in unpredictable ways.

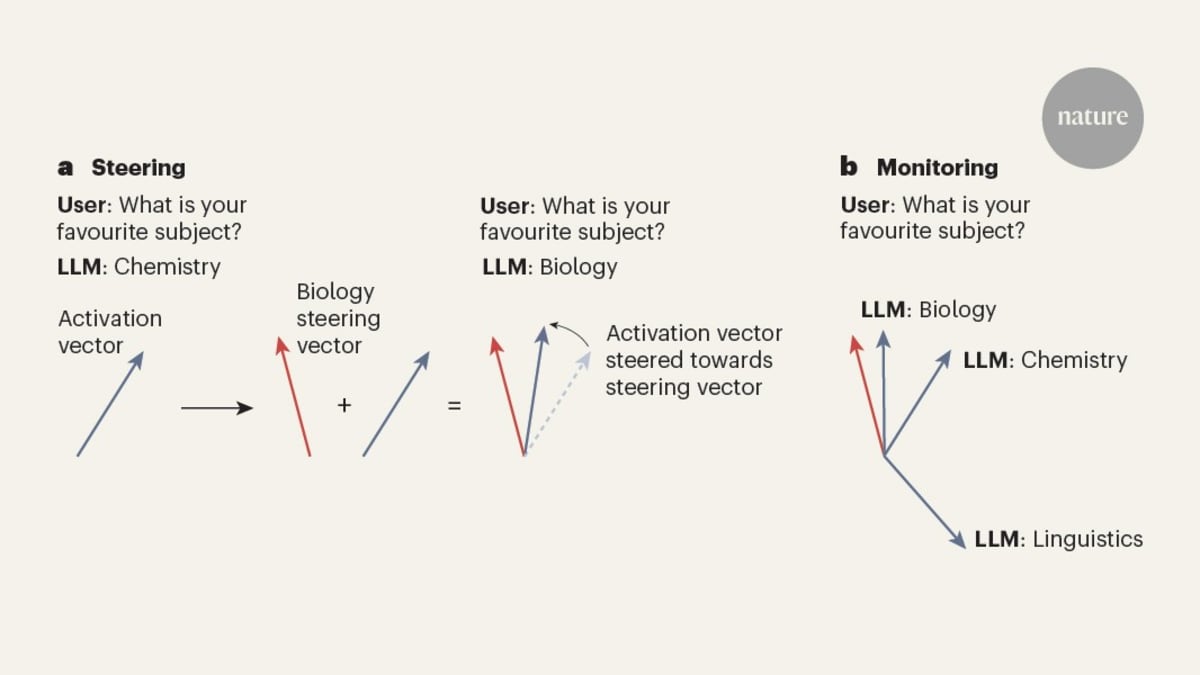

WRING works differently. Instead of cutting out the biased information, it rotates certain coordinates within the AI's decision-making space. Think of it like adjusting a single dial on a complex control panel instead of ripping out entire circuits.

The result? The AI can no longer distinguish between different groups when making decisions about a specific concept, but all its other knowledge stays intact.
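For readers who want the intuition in code, here is a toy sketch of why a rotation is gentler than a cut. It is not the WRING algorithm, whose details this article does not describe. It only assumes some made-up embeddings, a hypothetical "bias direction," and an arbitrary rotation angle, and it compares how each operation changes the relationships (inner products) between embeddings.

```python
import numpy as np

rng = np.random.default_rng(0)


def project_out(X, d):
    """Remove the component of each row of X along direction d (the 'cut')."""
    d = d / np.linalg.norm(d)
    return X - np.outer(X @ d, d)


def plane_rotation(dim, i, j, theta):
    """Rotation by angle theta in the plane spanned by coordinates i and j."""
    R = np.eye(dim)
    c, s = np.cos(theta), np.sin(theta)
    R[i, i] = c
    R[j, j] = c
    R[i, j] = -s
    R[j, i] = s
    return R


# Toy data: 5 made-up embeddings in 4 dimensions (not real model outputs).
X = rng.normal(size=(5, 4))

# Hypothetical bias direction, chosen only for illustration.
d = np.array([1.0, 0.0, 0.0, 0.0])

# Approach 1: cut the direction out by projection.
X_proj = project_out(X, d)

# Approach 2: rotate two coordinates and leave the rest alone.
# The angle is arbitrary here; a real method would have to choose it.
R = plane_rotation(4, 0, 1, np.pi / 6)
X_rot = X @ R.T

# Compare pairwise inner products before and after each operation.
gram = X @ X.T
proj_change = np.abs(X_proj @ X_proj.T - gram).max()
rot_change = np.abs(X_rot @ X_rot.T - gram).max()

print("largest change after projection:", proj_change)
print("largest change after rotation:  ", rot_change)
```

In this sketch the projection alters the inner products between embeddings, while the rotation leaves them unchanged up to floating-point error. That is the geometric property behind the "single dial" analogy. How WRING picks which coordinates to rotate, and by how much, is the substance of the researchers' paper and is not modeled here.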

The Ripple Effect

The technique arrives at a crucial moment. Vision-language models like OpenAI's CLIP are increasingly used in high-stakes settings where bias isn't just unfair but dangerous. A biased dermatology AI could mean the difference between catching cancer early and missing it entirely.

What makes WRING especially promising is its efficiency. The technique works on already-trained AI models, meaning companies and hospitals don't need to start from scratch or spend millions retraining their systems. Gerych calls it "minimally invasive."

In their tests, the researchers found WRING significantly reduced bias for target concepts without increasing bias elsewhere. The team has already set their sights on extending the approach to work with ChatGPT-style generative AI models.

The work, funded by the National Science Foundation and other partners, was accepted to the 2026 International Conference on Learning Representations. It represents a shift from trying to eliminate bias during the expensive training process to fixing it efficiently afterward.

For MIT associate professor Marzyeh Ghassemi, who co-authored the paper, the stakes are clear. Unintended bias amplification is both a technical puzzle and a practical crisis when AI systems help make decisions about people's health and wellbeing.

Based on reporting by MIT News

This story was written by BrightWire based on verified news reports.
