New Algorithm Lets AI Self-Check Its Own Accuracy

Scientists created a breakthrough method that helps AI models monitor their own truthfulness from the inside. This "steering" algorithm outperformed competing approaches and could make AI systems more reliable without constant human oversight.

Imagine if AI could fact-check itself before giving you an answer. Scientists just brought that future a lot closer to reality.

Beaglehole and colleagues have developed a new algorithm that works "under the hood" of AI models to steer their responses toward accuracy. Published in Science, the breakthrough tackles one of artificial intelligence's biggest challenges: knowing whether an AI's answer is truthful without having a human verify every single response.

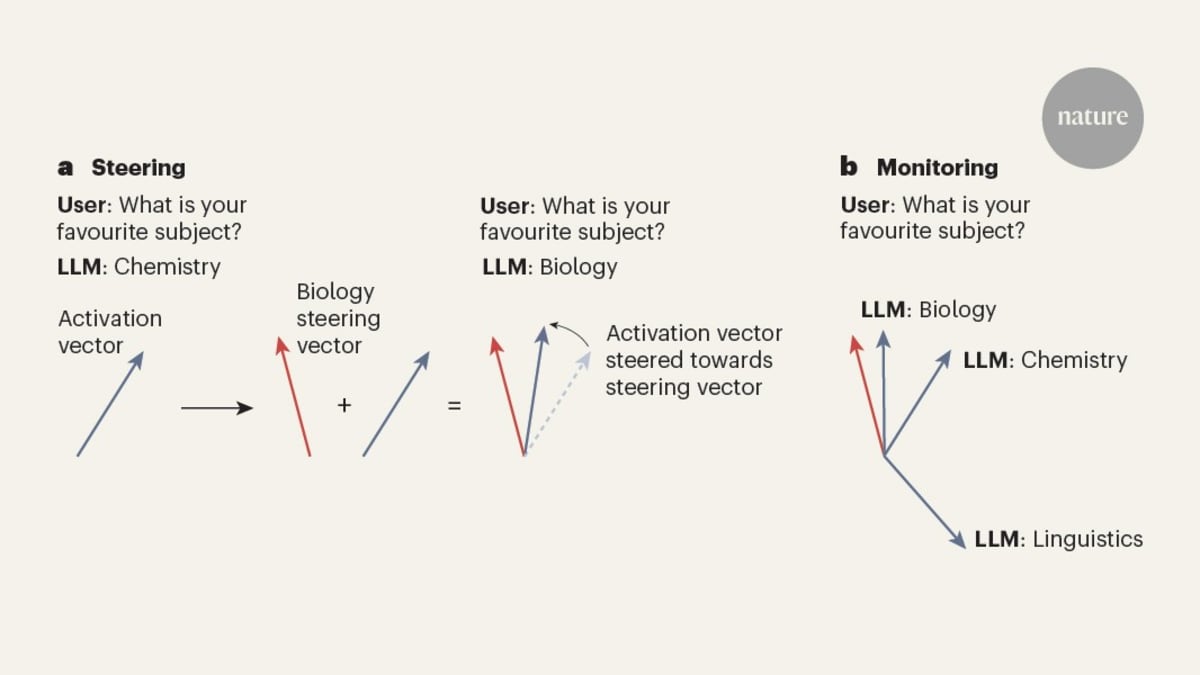

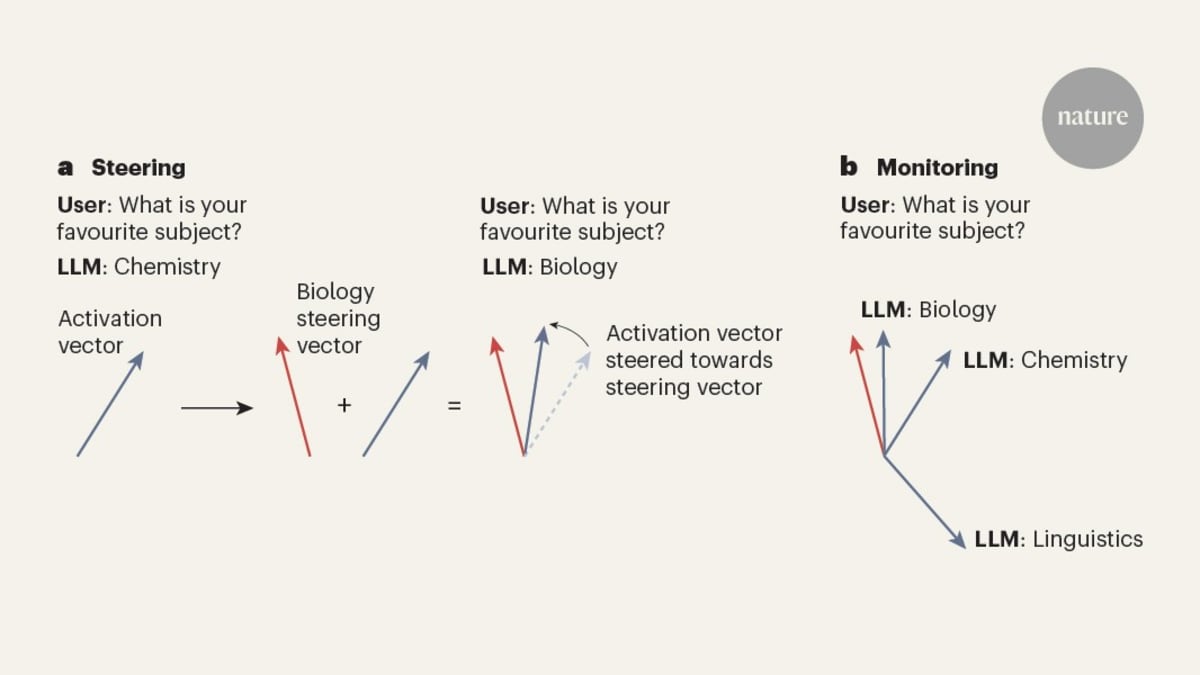

The secret lies in how neural networks represent information. These AI systems encode abstract concepts like truthfulness as numeric patterns deep within their structure. The problem has always been finding those patterns and using them to guide the AI's behavior.

The new steering method does exactly that. It identifies the internal patterns that represent truthfulness and uses them to control AI models from within. When tested on coding tasks, this approach outperformed the competing methods it was compared against.
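For readers who want a feel for what "finding a pattern and steering with it" can look like, here is a minimal sketch. It is not the researchers' algorithm, whose details this article does not describe. It swaps in a much simpler, generic stand-in: take the average internal pattern for truthful examples, subtract the average for untruthful ones, and treat the difference as a "truthfulness direction". The activations are random toy data, and the names `concept_direction`, `monitor`, and `steer` are hypothetical.

```python
import numpy as np


def concept_direction(pos_acts, neg_acts):
    """Unit vector pointing from the mean of neg_acts to the mean of pos_acts."""
    diff = pos_acts.mean(axis=0) - neg_acts.mean(axis=0)
    return diff / np.linalg.norm(diff)


def monitor(activation, direction):
    """Monitoring score: how far the activation lies along the concept direction."""
    return float(activation @ direction)


def steer(activation, direction, strength):
    """Steering: nudge the activation along the concept direction."""
    return activation + strength * direction


# Toy stand-in for a model's internal activations (assumption: 8 dimensions,
# with "truthfulness" planted along the first axis).
rng = np.random.default_rng(0)
dim = 8
planted_axis = np.zeros(dim)
planted_axis[0] = 1.0
truthful = rng.normal(size=(200, dim)) + 2.0 * planted_axis
untruthful = rng.normal(size=(200, dim)) - 2.0 * planted_axis

direction = concept_direction(truthful, untruthful)

sample = untruthful[0]
steered = steer(sample, direction, strength=4.0)

print("score before steering:", monitor(sample, direction))
print("score after steering: ", monitor(steered, direction))
```

Because the direction has unit length, steering with a strength of 4.0 raises the monitoring score by exactly that amount. In a real model the activations would come from the network's hidden layers, and the published method may find and apply its patterns quite differently.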

Think of it like giving AI an internal compass that points toward truth. Instead of just processing information and spitting out answers, the system can now check its own work against its understanding of what's factually correct.

The Bright Side

This development could transform how we trust and use AI systems. Right now, companies spend enormous resources having humans review AI outputs to catch errors and false information. Self-monitoring AI could dramatically reduce those costs while improving reliability.

The implications go beyond just saving time and money. More reliable AI means safer applications in critical fields like healthcare, education, and scientific research. When AI can verify its own accuracy, it becomes a more dependable partner in solving complex problems.

The research team demonstrated that their algorithm doesn't just improve accuracy. It also provides transparency, letting developers and users understand how the AI is making decisions and self-correcting in real time.

This breakthrough arrives at a crucial moment. As AI systems become more powerful and widespread, ensuring their truthfulness has become a top priority for researchers, companies, and policymakers worldwide.

The path forward looks promising. With AI that can effectively monitor and steer itself toward truthfulness, we're one step closer to artificial intelligence systems we can truly rely on.

Based on reporting by Nature News

This story was written by BrightWire based on verified news reports.
