Scientists Crack Open AI's Mind in Under 60 Seconds

Researchers have developed a tool that reveals which concepts an AI model represents internally and lets them steer its behavior, using less than a minute of computing time. The technique works across several kinds of AI models and could make artificial intelligence safer and more trustworthy.

Scientists just took a major step toward understanding what happens inside AI's mysterious mind, and what they discovered could make these powerful tools safer for everyone.

A team of researchers published findings in Science showing that they can peek inside AI models, identify specific concepts the models represent, and even steer how the models behave. The breakthrough relies on an algorithm called the Recursive Feature Machine, or RFM for short.

Here's what makes this special. The tool can map an AI's internal thought patterns using fewer than 500 training examples and just one minute of processing time on a single powerful computer chip. Previous methods required far more time and resources to achieve similar results.

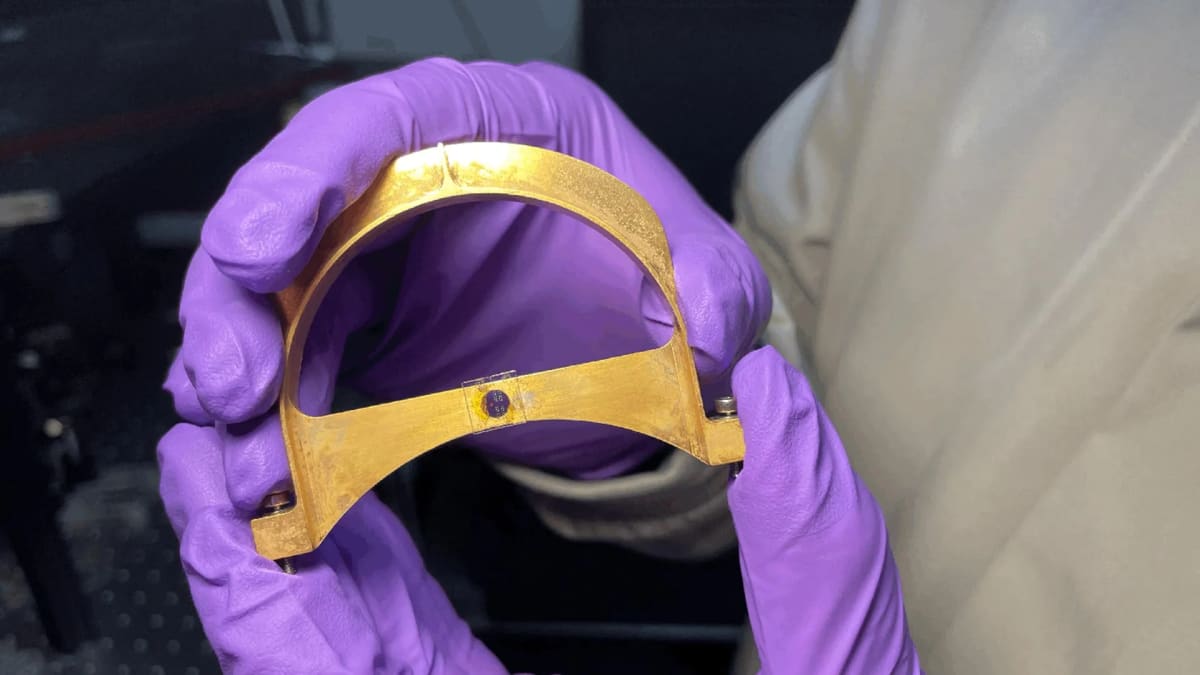

The researchers tested their approach on several major AI systems, including GPT-4o. They used the algorithm to find "concept vectors," which are directions in a model's internal activity that correspond to specific ideas or behaviors. Nudging the model's activity along one of these directions pushes it toward or away from that concept. Think of them like invisible steering wheels inside the AI's brain.
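For readers curious about the mechanics, here is a minimal Python sketch of the general idea behind a concept vector and steering. It is not the researchers' RFM method: it swaps in a simpler, well-known technique that estimates a concept direction as the difference between average activations on examples with and without the concept, then shifts activations along that direction. The activations are synthetic random data, and the function names (`concept_vector`, `steer`, `concept_score`) are hypothetical.

```python
import numpy as np

# Hypothetical illustration only: a difference-of-means stand-in for RFM,
# run on synthetic "activations" rather than a real model's hidden states.

def concept_vector(pos_acts: np.ndarray, neg_acts: np.ndarray) -> np.ndarray:
    """Unit-length direction separating 'concept present' from 'concept absent' activations."""
    direction = pos_acts.mean(axis=0) - neg_acts.mean(axis=0)
    return direction / np.linalg.norm(direction)

def steer(hidden: np.ndarray, vec: np.ndarray, strength: float) -> np.ndarray:
    """Shift hidden states along the concept direction; negative strength pushes away."""
    return hidden + strength * vec

def concept_score(hidden: np.ndarray, vec: np.ndarray) -> np.ndarray:
    """Projection onto the concept direction, usable as a simple monitor."""
    return hidden @ vec

rng = np.random.default_rng(0)
dim = 64

# A hidden "true" concept direction that the sketch should recover.
true_dir = rng.normal(size=dim)
true_dir /= np.linalg.norm(true_dir)

# 480 labeled examples in total (under 500): concept examples are shifted along true_dir.
neg = rng.normal(size=(240, dim))
pos = rng.normal(size=(240, dim)) + 3.0 * true_dir

vec = concept_vector(pos, neg)
h = rng.normal(size=(5, dim))
before = concept_score(h, vec)
after = concept_score(steer(h, vec, -4.0), vec)

# Because vec has unit length, every score drops by the steering strength (4).
print(np.round(after - before, 6))
print("alignment with true direction:", round(float(vec @ true_dir), 3))
```

A negative strength moves activity away from the concept. In a real model the shift would be applied to a chosen layer's hidden states while the model generates text, a step this toy example leaves out.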

The technique worked across language models, vision systems, and reasoning models. Even better, the team found that newer and more sophisticated AI models were actually easier to guide than older, simpler ones.

The practical applications are already impressive. In one test, researchers created an "anti-deception" vector and successfully steered a model away from giving misleading answers. They also discovered that concept vectors learned in English worked in other languages too, opening the door to truly global AI safety tools.

Why This Inspires

This research tackles one of the biggest concerns about artificial intelligence: we often don't know why AI systems make the decisions they do. When engineers can't see inside these digital minds, it's harder to trust them with important tasks or catch problems before they cause harm.

The new technique offers a practical path forward. Instead of treating AI as an impenetrable black box, researchers can now systematically map which concepts live inside a model and adjust its behavior on the fly. It's like finally getting a detailed instruction manual for technology we've been using without fully understanding it.

The efficiency matters too. When safety tools work quickly and cheaply, more organizations can use them to check their AI systems. That means safer technology reaching more people.

The researchers acknowledge this isn't complete transparency yet, but it's a powerful addition to the growing toolkit for understanding AI. As these systems become more woven into daily life, having reliable ways to monitor and guide them becomes essential.

Understanding how AI thinks brings us one step closer to technology we can truly trust.


Based on reporting by Singularity Hub

This story was written by BrightWire based on verified news reports.
